The rise of digital media, especially social media, has been a blessing and a curse. On one hand, it allows us to easily connect with new and old friends. Creating media is no longer reserved for large mainstream outlets; anyone can make their own and have a shot at having their voice heard. On the other hand, it has opened the floodgates to “fake news” and left people more vulnerable than ever to cyberbullying and harassment.

As one of the biggest social networks on the web, with two billion members, Facebook is home to perfect examples of the highs and lows of media in the digital age. One common issue is content moderation; many users have been frustrated with its enforcement rules, which have been completely secret until now. ProPublica recently published its findings after obtaining internal Facebook documents detailing company guidelines for content moderation.

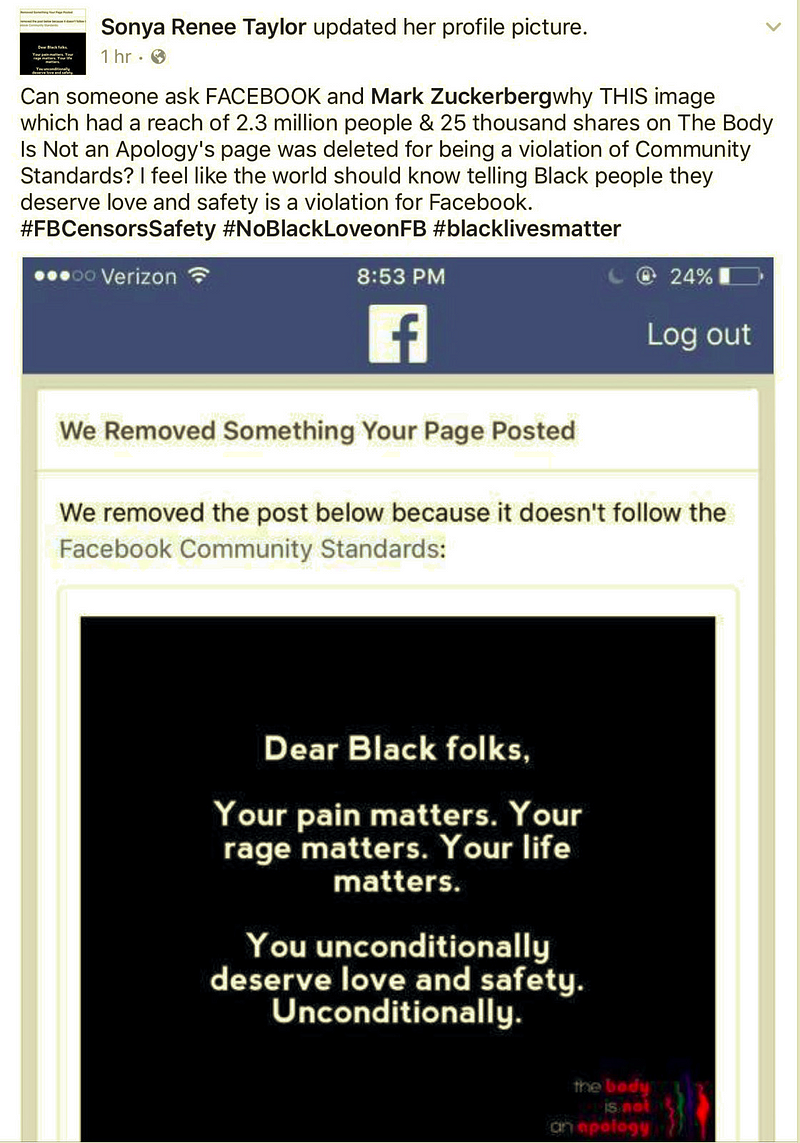

The current system isn’t working. My friends and I have personally experienced Facebook moderation gone wrong: posts calling us the n-word would be allowed to stay while pro-Black, anti-racism posts would get taken down. This seems related to the site’s weird system of “protected categories.”

The reason is that Facebook deletes curses, slurs, calls for violence and several other types of attacks only when they are directed at “protected categories,” which are based on race, sex, gender identity, religious affiliation, national origin, ethnicity, sexual orientation and serious disability/disease. It gives users broader latitude when they write about “subsets” of protected categories. White men are considered a protected group because both traits are protected, while female drivers and black children, like radicalized Muslims, are subsets, because one of their characteristics is not protected.
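The logic is easier to see when written out. Here is a minimal sketch of the rule as described above, not Facebook’s actual implementation. The function name and the trait labels `occupation`, `age` and `ideology` are my own shorthand for the unprotected characteristics in each example.

```python
# Categories the reporting lists as protected (labels are shorthand).
PROTECTED_CATEGORIES = {
    "race", "sex", "gender identity", "religious affiliation",
    "national origin", "ethnicity", "sexual orientation",
    "serious disability/disease",
}

def is_protected_group(trait_categories):
    """Return True only if the group is described entirely by protected traits.

    Hypothetical helper: a single unprotected trait turns the group
    into a "subset", which gets less protection under the described rule.
    """
    traits = list(trait_categories)
    return bool(traits) and all(t in PROTECTED_CATEGORIES for t in traits)

# Hypothetical encodings of the examples from the text.
examples = {
    "white men": ["race", "sex"],
    "female drivers": ["sex", "occupation"],
    "black children": ["race", "age"],
    "radicalized Muslims": ["religious affiliation", "ideology"],
}

for group, traits in examples.items():
    status = "protected" if is_protected_group(traits) else "subset (not protected)"
    print(f"{group}: {status}")
```

Under this reading, adding any unprotected qualifier to a group is enough to strip its protection, which is exactly the loophole the examples expose.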

That’s why Republican Rep. Clay Higgins of Louisiana was able to post something like this targeting “radicalized” Muslims. According to Facebook, because Higgins called for killing only a subset of the religion’s members, the post is acceptable:

Meanwhile, posts like this don’t make the cut.

source: Sonya Renee Taylor/Facebook

Another troubling aspect is Facebook’s responsiveness to public pressure, meaning that celebrities and other prominent folks like Donald Trump get special treatment.

The documents reviewed by ProPublica indicate, for example, that Donald Trump’s posts about his campaign proposal to ban Muslim immigration to the United States violated the company’s written policies against “calls for exclusion” of a protected group. As The Wall Street Journal reported last year, Facebook exempted Trump’s statements from its policies on the order of Mark Zuckerberg, the company’s founder and chief executive.

These issues highlight the importance of independent media. Facebook, while a big part of many people’s lives, is still a company that aims to make as much money as possible by getting (and keeping) a growing number of users. Getting on the bad side of powerful folks like Trump wouldn’t serve Facebook’s bottom line. And the company’s secretive, biased tactics show why scrutinizing these practices is so important. Because Facebook is a powerful (and arguably necessary) tool for digital organizing and community in the modern age, its practices can have far-reaching consequences.