Up in Wisconsin, there’s more going on to undercut public education than Governor Walker’s budget cuts or the stalled-for-now union busting. There’s also the Value-Added Research Center. The wonderful folks at VARC think they’re on to something. If they’re right, they’ve stumbled onto the perfect way to show how good a teacher is from year to year. Once we know that, we can pay them what they’re worth. Treat them like professionals. It’s a laudable goal, right?

Except it isn’t. It’s a lie. VARC's work is a complex-looking bit of mathematical trickery wrapped up in demagoguery and based on a series of erroneous assumptions. In other words: a lie.

The VARC work is based on two assumptions: that the effect of teachers can be quantified and measured and that the effect is limited, negligible or non-existent. How can I say such a thing, you demand. Value-added metrics show the value that a teacher adds to the classroom. It’s even in the name.

It’s like this. The VARC folks use gardeners as an example. Each one has to be rated on how well their trees grow. (Never mind that this is a stupid way to rate gardeners. Or that kids aren’t trees.) Each tree is affected by four factors, only one of which is the gardener. By eliminating the effects of the other three, we are left with just how the gardener affected the growth of the tree.

For teachers, VARC assumes that the difference between the test scores from year to year is the result of a half-dozen factors that, in turn, are the result of dozens of other factors. Each additional factor means that the perceived effect of the teacher must be smaller. They don’t even understand the difference between a predictor (the student’s prior test scores, family member dropouts) and a cause (the teacher). Beyond the logical problems, the math is so flagrantly stupid at this point that I don’t want to go into it. Basically, the difference between two years’ test scores, minus all those other factors, is the effect of the teacher. It’s made to look complicated. It isn’t. It’s wrong.

But we need to know which teachers are effective, you whine. How else can we rate them? By not being fools.

You want to use test scores to rate teachers? Fine. The only reason for VARC’s complex-looking formulae is that they are trying to compare different years’ tests. But kids don’t learn or test on the same things in third grade and fourth grade, so we have to correct for that and the effect of their previous teacher, and, and, and...

Let’s do this a bit smarter. Instead of using the previous year’s score, give the kids the final exam on the first day of class/school. Give the kids the same test at the end. Change the order of the questions or the answers if you want, but otherwise make it the same test. The difference in scores? That’s what the teacher did.
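To make the arithmetic concrete, here is a minimal sketch of the pre-test/post-test comparison. The function name and the scores are made up for illustration, and it assumes every student sat both sittings of the same test.

```python
from statistics import mean


def score_gains(pre_scores, post_scores):
    """Return each student's gain: post-test score minus pre-test score."""
    if len(pre_scores) != len(post_scores):
        raise ValueError("each student needs both a pre-test and a post-test score")
    return [post - pre for pre, post in zip(pre_scores, post_scores)]


# Hypothetical class of four students, same test on the first and last day.
pre = [20, 35, 50, 15]
post = [70, 80, 90, 60]

gains = score_gains(pre, post)   # per-student growth
class_gain = mean(gains)         # average growth for the class
print(gains, class_gain)
```

No prior-year scores and no correction terms are involved: the only inputs are the two sittings of one test.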

Sure, there might be other things in there. Some students would study more. Some less. Someone might fail first semester and sign up for tutoring and dramatically improve their scores. But for the most part, who the students were at the beginning of the year is also who they’d be at the end of the year. They’re not going to be any richer, less black, or more male, and their prior test scores will be surprisingly the same. VARC says we have to control for (eliminate) those biases in the test score, but if both pre- and post-test are biased in precisely the same manner, the biases cannot influence the observable trend.
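The cancellation can be checked with a few lines of arithmetic. This sketch assumes the bias is a fixed number of points added to (or taken from) a student's score on both sittings; the scores and bias values are invented, and a bias that scales scores or hits a ceiling would not cancel this cleanly.

```python
def observed(true_score, bias):
    """Score the test reports when a constant bias shifts the true score."""
    return true_score + bias


def gain(pre, post):
    """Growth between the two sittings."""
    return post - pre


# Hypothetical student: what they really knew in September and in June.
true_pre, true_post = 40, 75
true_gain = gain(true_pre, true_post)

# Apply the same bias to both sittings and compare the measured growth.
measured_gains = [
    gain(observed(true_pre, bias), observed(true_post, bias))
    for bias in (-10, 0, 10)
]
print(true_gain, measured_gains)
```

Whatever the bias is, it appears once with a plus sign and once with a minus sign, so the measured growth is the same in every case.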

Ah, but VARC claims that they’re using both growth and attainment measures. They aren’t. They’re measuring attainment, but for individual students instead of in the aggregate. But what if you want to take both growth and attainment into account? How about this:

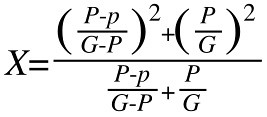

X is a number from 0 to 1 that recognizes both growth and attainment, each in proportion to its amount, where p is the pre-test score, P is the post-test score on an aligned test, and G is the upper limit score. You want it in a percentage? Multiply X by 100. You want to use it to aggregate data across classes and teachers? Normalize the distribution.
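As one possible reading of "normalize the distribution," the sketch below converts a set of X values into z-scores (distance from the mean in standard deviations). That choice is an assumption, as are the function name and the sample values; it does not compute X itself, which comes from the formula above.

```python
from statistics import mean, pstdev


def z_scores(values):
    """Rescale values to mean 0 and standard deviation 1."""
    center = mean(values)
    spread = pstdev(values)  # population standard deviation
    if spread == 0:
        raise ValueError("all values are identical; nothing to normalize")
    return [(v - center) / spread for v in values]


# Hypothetical X values for three classes.
x_values = [0.2, 0.4, 0.6]
normalized = z_scores(x_values)
print(normalized)
```

Once every class is on the same scale, results from different classes and teachers can be set side by side.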

But what about the rest of it? How are you going to mathematically rate a Chemistry teacher’s rapport with some of the school’s most difficult students? How about the English teacher that can make thugs cry while reading poetry? How about the History teacher that makes the students want to go out and read up on ancient Babylon outside of class? The art teacher that inspires a kid from a broken home to be the first from their family to go to college? How do you put a number on a teacher’s ability to comfort a child when a parent has died in front of them?

I suppose the point is this: You can come up with a way to measure the amount of learning. You can assume that teachers have a big effect or a small effect and make your results match your assumptions. But you cannot show the value of a teacher with just a number, not when there is so much more worthwhile that goes on at school than just learning.