I want to recount a moment in math history, of the great Alan Turing's 1936 contribution to computer science and mathematical logic -- a great, geeky triumph. I hope to share a glimpse of the elegance and beauty found in Turing's proof, being neither too technical nor too vague. I warn you, it's a nerdy topic from long ago, and I've added opinionated blather about history and math as a non-historian and non-mathematician; but I welcome correction and dissent in the comments.

Tell me, O Muse, of that ingenious hero, who thought long and hard on how Hilbert's question to destroy.

I suppose every century is full of upheaval, but the early twentieth might deserve some special infamy for destruction, disillusionment and angst, at least in Europe. We all know of the bellicose politics and volatile economies, but even in academe, mathematicians and scientists were shaken by new developments. In 1905, Einstein published an amazing quartet of papers that led to a critical rethinking of the characteristics of time, space, matter, and energy. Quantum mechanics emerged in this period, and made natural philosophers doubt the particularity of particles and the innocence of observation. Science of the late nineteenth century had been bursting with confidence, but it stumbled in the new century. Bertrand Russell and A. N. Whitehead composed Principia Mathematica, a monumental treatise on the logical foundations of mathematics, intended to serve as a bedrock on which all mathematical truth could rest. That hope was soon curdled by a young Austrian logician named Gödel. David Hilbert's optimistic motto (later, his epitaph) had been "We must know! We will know!" In 1900 he famously presented ten grand-challenge style unsolved mathematical problems to inspire a new generation of mathematical research. One of them evolved into what I call the Great Decision Problem, whose downfall this story tells. Even for the very privileged, it was a troubling time.

Into this turbulence, whilst the shock waves of the sinking Titanic were almost still rippling across the English main, Alan Mathison Turing was born. I'm devoting a separate diary to tell his story, and this is a companion piece to recount one of his great accomplishments. For now it may be enough to describe him as a fiercely independent thinker who was passionate about science, deeply intelligent, and often at odds with convention. Somehow he survived English public school and won a scholarship to Cambridge. First as an undergraduate, then as a Fellow, he studied mathematics both pure and applied. Influenced by a graduate seminar in 1935 on mathematical logic, he chose to tackle one of Hilbert's grand-challenge problems, the Great Decision Problem (more properly known as the Entscheidungsproblem).

This isn't Wikipedia, so I won't lay out all the details, but the idea of the Great Decision Problem was in the spirit of a challenge like this: Give a procedure to decide if a strictly logical claim is true or not. To be more specific, let's use the logic of arithmetic as an example. In that case, that task would be to produce an algorithm to judge whether any generalized arithmetic claim is true or false. I have in mind something along the lines of these three claims:

- "For all integers N greater than 2, the are no positive-integer solutions to the equation XN+YN=ZN."

- "For all integers N greater than 257, (2N - 1) is a prime number."

- "Every even integer greater than 2 can be expressed as the sum of two primes."

You could think of the above three statements as a short true-or-false quiz. (Care to give it a go?) Hilbert wanted a procedure that could perfectly ace such quizzes: a process to decide the truth (or not) of each such claim: a logical umpire. If we had such a procedure, it would eventually be able to tell you that #1 is true and #2 is blatantly false. Furthermore, it would also be able to deliver a correct verdict for #3 as well, which would be terrific, since no one even knows whether that one is true or false.

Hilbert stipulated that the decision procedure had to "cook from scratch," that is, it had to derive its answers from basic, agreed-upon facts (e.g., "n = n") called axioms. Hilbert in fact wanted more than this: he wasn't just interested in arithmetic claims. He wanted a process that would use any simple ("first-order") set of axioms, not necessarily arithmetic axioms. It should also work on, say, geometric axioms or any other first-order formalized symbolic system. (Actually, arithmetic is not perfectly first-order, but it's fairly close, so let's not speak of it further.)

Cooking from scratch, from raw axioms, means Hilbert wanted a kind of proof-generating algorithm, although he didn't use the word algorithm. At the time, no one had a solid definition of what an "algorithm" was, and the concept was practically nameless. He used the term "definite procedure." Interestingly, he didn't even seem to question the existence of such a procedure: "We must know! We will know!" He was sure there had to be a way.

Alan Turing, 23 years old, was resting in a meadow one day, and he intuited that Hilbert's optimism was misplaced: there was no way. He could prove it. Turing lived before the era of electronic computers, yet what he undertook was to mentally "invent" the programmable computer, and then prove things about its capabilities and (astonishingly) about its ultimate limitations. Specifically, he proved that any solution to Hilbert's Decision Problem lay beyond the outer limits of all that is computable. Let me tell you how.

Step 1. Invent the computer.

First, Turing had to give a precise definition of "algorithm" or "definite procedure," call it what you will. He dreamed up a kind of machine for computing. This is an entirely conceptual formulation, defined only on paper, and suitable only for thought-experiments. Just as a novelist writes about people she has never met, Turing wrote about a computer no one had ever seen. Today we call his concept "the Turing machine," despite its incorporeality.

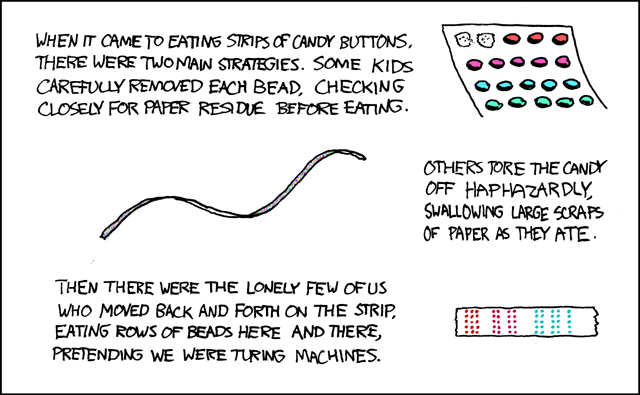

The Turing machine is very bare-bones; that is one of its strengths. If you can picture a reel-to-reel tape recorder, you're on the right track. There's a long tape that serves as the medium for memory, divided lengthwise into squares, like frames in a strip of 35mm film. Each square can hold a single character. The tape could start off blank, or with some contents -- if you are the one doing the thought-experiment, it's up to you. Its length is unlimited (infinite, if you prefer). The tape is threaded through a head that is sort of a feeble central processing unit, which can see one square of the tape at a time. It can read, write, and replace whatever character is in the current square, and it can shift the tape left or right by one square. The head also has a fixed table of instructions it must obey. The table could be lengthy, but anyway it's not modifiable. Again, if you're the one doing the thought experiment, you must specify what's in the table, but your options are limited. At each step the machine can interact with the tape as described, and jump to a different step in the table; or it can just halt if and when and it finishes. And that's it! Turing perceived (and argued at length) that this conceptually emaciated system has just enough sophistication to solve any computational problem.

For starters, with enough effort you could design a table of instructions that would work as a calculator, so that if you launched the machine with a tape marked "6X7=?" then the machine could chunk around for awhile and eventually halt with a tape that says "6X7=42." Or one could program up a related table of instructions that would work as an arithmetic-checker. Then if one launched it with a tape marked "6X7=63," the machine would finally halt with a tape that says "FALSE." In a different vein, you could easily mess up a table of instructions and produce a machine that just goes into an infinite loop, never halting. Writing working tables of instructions for tasks like these examples would be difficult, but hey, so what? So was the invention of the mechanical adding machine and the Jacquard loom. The important thing is, it would be possible. The amazing stuff is still to come.

Step 2. Invent virtualization.

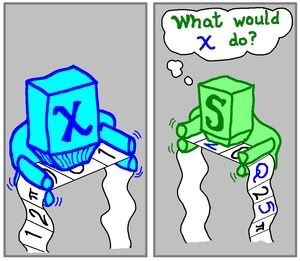

Turing showed his conceptual machine was powerful enough to simulate yet another Turing machine. That is, the table of any Turing machine X could be translated into symbols on a tape, and the input tape of X (whatever you like) could be interleaved onto the tape too. Then another Turing machine, S (for "simulator"), with a suitable table could decode the tape and simulate the behavior of X. If and when X halts, then S halts. Whatever is the output of X would likewise be the output of S. And of course X could be any machine -- it might even be simulating another machine W, and so on. But if it were turtles all the way down, it would run forever. Let's hope that at the innermost layer, there is a machine that eventually finishes and halts. Then the machine simulating it will halt, and so on up the line, like falling dominoes. Only then will machine S eventually halt. There are two related important points here: that you can build a simulator of another machine, and that you can "encode" the table of a machine into tape symbols, along with the input tape of the simulatee.

The idea of general-purpose computers was not new, but I think Turing was the first to take the idea of simulation and point out what a big deal it is. As The Matrix proved, you can take this concept of simulation and run with it, really run. We need to move on, but one last plot point here is that one isn't limited to a straight-up simulation: you can take S as a starting point and improvise, as we will see.

Step 3. Discover its limitations.

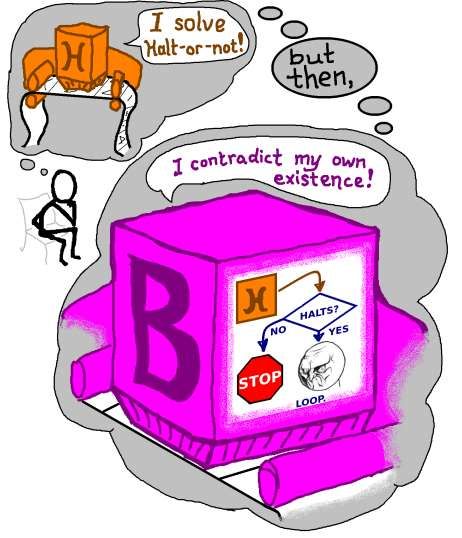

My favorite insight of Turing's was his deduction that no algorithm could ever perfectly predict whether Turing machines would halt. That is, there is no procedural way to give a guaranteed answer as to whether an arbitrary machine will ever finish -- even when you know its input tape and its whole table. I would call this the "Halt-Or-Not" Problem, and as I said, it is insoluble. If a Turing machine could solve it, it would look at an encoded machine/tape combo as input, and write as output on its tape "IT HALTS," or "IT NEVER HALTS." You probably already see why mere simulation won't cut it: if the machine to be simulated never halts, your faithful simulation would also run forever. Of course, that's what makes it a simulation. Turing not only proved that Halt-Or-Not is generally insoluble, his proof is a gem. To me it's better than any science fiction, because it's actually science fact, a day-trip through Dave Bowman's stargate.

Here is why Halt-Or-Not is an impossible task for a Turing machine: imagine, perversely, that there is a way to solve it, with Turing machine H. Then you could simulate H on a machine B (for bitter), which we make mostly like simulator S, but we will add a dash of bitterness and spite. A proper simulation of H always halts, yet we sabotage B so it deliberately does not halt whenever the simulation of H finishes with output, "IT HALTS." Whereas if the simulation of H finishes by saying "IT NEVER HALTS," then B acts like any other simulator, and also halts. The final step is to encode B on a tape and give that tape as input to B. What would B do? Would it halt, or not? Think about it for a moment. If B is refusing to halt, that means it is being "spiteful," which happens when H generates output "IT HALTS." Yet that answer means B does halt -- a contradiction! Now, if B does halt, that only can happen after H has produced the output "IT NEVER HALTS"; again a contradiction! We are backed into a corner, and there is only one way out: we must etch-a-sketch away our perverse assumption that H could even exist. The paradox is proof that it does not. And that is why there is no way to solve Halt-Or-Not in general.

I know the above was a knotty argument, but if you work through it, you might agree with me that it is also very beautiful, like a Bach fugue, circling back on itself. It is also an essential building block for theoreticians. Many Bothan robots' heads exploded to bring us this information.

Step 4. Finish the job.

We are ready to take on the Great Decision Problem, and the first step will be like what we just did above: we will brashly assume that there is an algorithm to solve it, in the form of Turing machine G. You would give it a tape containing a set of axioms and a claim, and it would kerchunk back and forth awhile, then halt with "TRUE" or "FALSE" written on the tape, as appropriate. The thing is, with G in hand, we could produce that decider H for Halt-Or-Not, which would be an outcome we've shown can never be. Let me explain why.

This will be a bit sketchy, since we're both tired by now. Let's imagine we are modern Dr. Frankensteins, building the forbidden machine H in our laboratory. Our creation H will use, as its "brain," the postulated machine G, the one we hypothesized could solve the Great Decision Problem. With our machine H we want to know whether machine X, reading some input tape T, will halt or not. The key fact here is that each step of a Turing machine computation is so darned primitive: a twiddle of the tape and a jump to the next step in the table. These primitive instructions can easily be translated into a set of first-order logical axioms. (Remember them?) Our H will need to look at X's table to translate its possible steps of computation, but that's no problem. Tape T also becomes an axiom, as the "initial truth." Finally, we can create a logical claim that captures the idea "X will halt." I'm leaving out the details, but I hope you can get a sense of the strategy here.

With a set of axioms and a logical claim, we simulate G, the Great Decider, and it does the really hard work. It decides whether X's computational possibilities (in the axioms) ever lead to a halting state (the claim). If G says TRUE, that means X halts; if G says FALSE, then X never halts. Behold! They said H couldn't be done, but if we had G as a resource, we could build the forbidden H and rule the galaxy!

Sadly, reality is not that plastic. We have proved H does not exist, yet G would enable its existence; therefore G cannot exist either. This kind of argument -- one solution enabling another -- is called a reduction, and I find them bewitchingly lovely.

And that, my exhausted readers, is one way we know Hilbert's Entsheidungsproblem was a hopeless dream. Unfortunately for Turing, his solution was not the first. Alonzo Church used a very different method to prove the same result, and published just when Turing readied his paper for submission. But I prefer Turing's approach, so original and full of style. The Turing machine and the Halting Problem now are canonical concepts in university computer science curricula everywhere, even as the Entscheidungsproblem has shuffled off to the footnotes of history.

Further reading

If you haven't had your fill yet, there are some really thorough popular treatments of computability found in Hofstadter's Godel, Escher and Bach: an Eternal Golden Braid and Penrose's The Emperor's New Mind, though both books are quite long and largely about other subjects. They examine the Turing machine as part of larger stories about (in Hofstadter's book) logic, recursion, and the emergent properties of formal systems; and (in Penrose's book) the mind and the laws of physics. If you're more in a hurry, you might prefer the excellent biography of Turing and his work, Alan Turing: the Enigma by Andrew Hodges.